Sustainable Synthetics & Inoculated Minds | IMPACT ASSESSMENT

3/5 A framework for expanding our understanding of generative AI's impact on the media ecosystem

"A new medium is never an addition to an old one, nor does it leave the old one in peace. It never ceases to oppress the older media until it finds new shapes and positions for them.”

- Marshall McLuhan, 1967

Assessment Framework

Throughout its existence, the media ecosystem has continually evolved and been shaped by new technologies for creation and transmission. Technologies capable of profound systemic disruption are typically greeted with suspicion, debate and caution when they emerge. Given that the ethos driving most innovation in generative AI is to ask for forgiveness rather than permission, there is an inevitable “dark valley of hidden harms” (Rose-Rockwell, 2023) to pass through before a plausible, eventual correction.

New communication and creation tools have repeatedly diversified expressive and creative output. Some comfortably co-exist with legacy formats (e.g. painting and photography), while others have augmented them (e.g. analogue instruments and synthesisers) or cannibalised them altogether (e.g. streaming platforms and CDs / DVDs).

The length and depth of this so-called ‘dark valley of hidden harms’, as well as the extent to which legacy and synthetic media can co-exist, depend on four interrelated factors:

Regulatory Activities

What are the terms for development, deployment & consumer protection?

Sociocultural Currents

What are the assumed positions, and how will they affect people's attitudes and opinions?

Consumer Adoption

What factors shape imminent and future behaviours (incl. media diets)?

Business Practices

What will drive adoption & innovation?

Domain experts and specialist media or commentators tend to take a singular, narrow view of impact. That approach has its need and merit, but it risks ignoring the interconnectedness of the factors above. For this and many other reasons, exploring generative AI’s impact across multiple domains and through multiple lenses (ergo holistically) felt timely. By taking a more comprehensive approach and accounting for these connections, we can make more effective decisions and contribute to more equitable outcomes.

The factors we’ll drill into are by no means definitive - they should ideally be couched in wider economic, political / geopolitical, environmental, and technological developments.

Let us at least make a start by analysing the factors that have the most immediate impact.

TL;DR

Regulation: The current lack of effective regulation of digital communications and media is an obstacle to more comprehensive synthetic media regulation (which, ideally, would include media psychology / neuroscience guidance).

Sociocultural Currents: Fragmented attitudes span resistance, caution, and celebration, while pop culture repeatedly demonstrates its ability to sway opinion and catalyse regulatory and consumer action.

Consumer Adoption: Media diets are on track to become even more individualistic (customisable synthetic media, chatbots), while financial means will play a key role in the choice between ‘organic’ and ‘ultra-processed’ media.

Business Practices: Low-cost or free, high-volume media are adopting synthetic content far more extensively than premium media, while chatbot interfaces and robotics prompt a rethink of existing distribution structures and survival tactics.

Regulatory Activities

An acute awareness of social media’s legacy has catalysed debate and regulatory action around generative AI earlier than perhaps anticipated. In parallel with the technology’s rapid deployment, most major governments have been avidly drafting positions, policies and harm mitigation tactics.

Balancing consumer protection with each nation’s or union’s desire for competitiveness and economic gains, regulatory efforts have so far focussed predominantly on foundation models (training data, labour, environmental impacts, risks). Synthetic media-specific guidance to date is limited to mis- and disinformation threats (particularly in advertising and political communications), manifesting in proposals for IP and likeness protections / restrictions and for disclosure via labelling: a start, at least. The next step would ideally tackle the ecosystem, capabilities and business practices of the generative AI tools built on top of foundation models, along with the synthetic media those tools produce.

Yet given that most nations (at least in the West) lack adequate digital communications and media regulation to begin with, comprehensive guidance on synthetic content and the tools that produce it, along with any prospective interventions, may not receive immediate or adequate attention.

Social platforms are often slow to act (if they act at all) when content violates guidelines on copyright, violence, hate speech, likeness protection or factual accuracy, and they are far from agreeing on a universal standard for content moderation, as the vast reach of the explicit Taylor Swift deepfakes, circulated mainly on X, demonstrated. The speed at which content travels and the refuge of anonymity have repeatedly been blamed for the ineffectiveness of interventions, even with moderation and self-policing mechanisms in place. How will the ecosystem fare once generative AI tools increase that velocity and make it easier to forge alternative identities?

The line between benefit and overreach is difficult to draw when it must appease a broad spectrum of positions on free speech, growth ideologies and cultural permissions. It is telling that only China has, thus far, developed detailed synthetic media regulation, in the form of its ‘Provisions on the Administration of Deep Synthesis of Internet-based Information Services’. Building on pre-existing, rigorous controls over digital communications and media, China’s speed and attention to detail in regulating a synthesising media ecosystem could be admirable, if it weren’t also anti-democratic in its intent.

Under the watchful eyes of critics and concerned members of the public, Big AI has been proactive in shaping emerging and future regulation. Its leaders are making overt gestures to work with governments and lawmakers, publishing think pieces on governance / oversight frameworks, and reassuring the public in unusually mainstream forums (and business leaders on their own turf). Armies of in-house policy experts at Big AI companies are diligently anticipating future regulation and guiding the implementation of mitigation and transparency tactics. These include watermarking (on offer from most Big AI players at this stage), letting creators opt out of (future) model training sets, and creating or joining ‘best practice’ industry bodies. The EU’s Digital Services Act already requires social networks to include a feature that lets European users switch off algorithmically curated content; an equivalent option to keep feeds free of synthetic content is an obvious next step, if its commercial implications prove palatable.

Addressing more specific threats to the media ecosystem is the focus of new pressure groups, watchdogs, non-profits, specialist think tanks and innovators (e.g. Witness, Reality Defender, Fairly Trained). Their contributions include evidence-based research on core issues that informs regulatory discourse, campaigns designed to shape public opinion, tangible interventions that benefit creators and consumers alike (e.g. badging AI-free content, as the activist-driven Credo 23 aims to do), and early attempts at certifying ‘fair’ use of training data. The Partnership on AI (PAI) comes closest to plugging regulatory gaps with its framework for responsible synthetic media practices, aimed at tool builders, their users (i.e. creators) and content distributors (outlets and platforms).

However, besides being voluntary, PAI’s framework doesn’t consider deeply enough how consuming synthetic media can affect people’s senses and minds, and so stops short of more comprehensive consumer protections. An ideal next step, in regulatory scope, internal governance and outside pressure alike, would include media psychology / neuroscience guidance while addressing issues surrounding digital media’s distribution infrastructure and the responsibility of generative AI tool developers.

Sociocultural Currents

Dominant narratives propagated by the tech industry, its critics and its cheerleaders (techno-optimistic ‘boon’, decelerationist ‘future doom’ and ‘concerned about the present’ pragmatism) continue to influence sociocultural discourse and the currents it produces.

Swathes of the news media favour emotionally charged stories that epitomise the gravest harms generative AI could cause (while conveniently benefiting from this sticky narrative).

Cultural resistance is emerging, catalysed by the loud voices of those whose creative livelihoods are threatened by theft and looming redundancy. Some critics and their like-minded adherents even view synthetic media as a continuation of the algorithmic blandification of media. Growing groups of consumers who find these developments unjust or unfortunate may therefore intentionally avoid synthetic media and opt instead for the content equivalent of organic food or sustainable fashion.

Enthusiasm and celebration are also on display. Many newly created generative AI fan accounts distribute news about the latest tools and creative outputs to curious and excited followers. Communities and fandoms are emerging around new genres of synthetic media, often created by new breeds of creators (see ‘prompt archetypes’ in the previous post). Income hacks for creating user-generated content with generative AI tools, designed by infopreneurial influencers and distributed to side-hustlers, promise to prop up livelihoods in a challenging economic climate. (Was this what was meant by ‘prosperity for all’?)

The polarisation of views and relentless debate risk distracting from, and delaying, urgently needed alignment across ideological divides. This moment requires deep, varied and nuanced debate to navigate issues as they emerge, not division. As with many other matters in our world, there is no clear map to guide us towards unity and open-mindedness. It is, however, a testament to the power of pop culture that the sociocultural currents it shapes can also manifest in action. In addition to benefitting local economies, providing exceptional labour conditions and generating $1bn+ off the back of an ongoing world tour, Taylor Swift also got the White House’s attention and a commitment to action following her deepfake debacle.

Consumer Adoption

While generative AI tool adoption statistics paint a picture of growing mainstream usage, we still lack evidence on how much of our media diets has been synthesised and how we feel about it. A clear understanding of voluntary adoption and its drivers is also still missing. Breaking down the factors that have shaped media diets to date can at least help us gauge how adoption could play out.

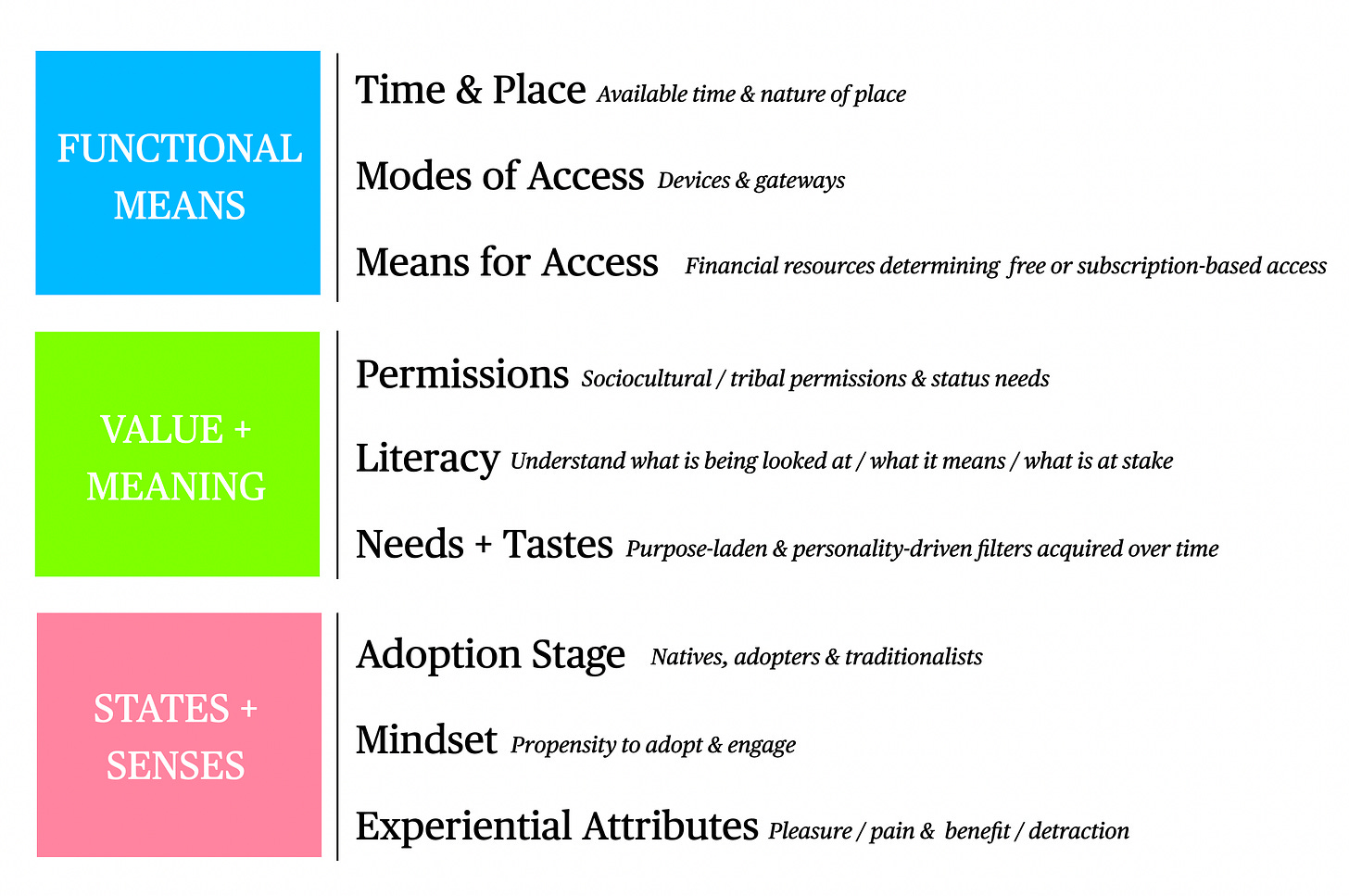

The determinants of media diets can be clustered around functional means of access (time / place, devices, and financial resources), value and meaning desired from and attributed to content (social permissions, literacy levels, needs / tastes), as well as states and senses (adoption stage, mindset, experiential affect).

I won’t expand on each element in detail, but I do want to look at one critical determinant that a synthesised media landscape, itself inextricably linked to commercial conditions, will affect: financial means.

When considering how means of access shape media diets, the role of financial means in food diets is a helpful analogy. The food most beneficial to its consumers’ biological health is typically organically produced (and thereby free of toxins such as synthetic pesticides and herbicides), locally grown and distributed, and sustainably farmed to protect the integrity of the environmental system involved. Labour intensity and resource costs are high, and are consequently passed on to the consumer to sustain the process.

At the other extreme, ultra-processed food (which arguably exists in an entirely different, lab-based ecosystem, though not without leaving its mark on the natural one) is engineered, with the help of synthetic molecules, to be cheap, abundant, and accessible anywhere, all year round. Regrettably, the true cost of ultra-processed food is not reflected in its low price; instead, consumers pay with their health, facing biological and neurological harms such as obesity, diabetes, weakened immune systems and depression.

Premium media tends to be values-led and / or driven by a creative vision, making it akin to artisanal or organic food or, in the context of synthetic matter, to a biodegradable material. Low-cost or free media’s priority is to be consumed in volume, so it is tuned to ensure that it is, mirroring ultra-processed foods and POPs (persistent organic pollutants). As we’ll see shortly in the ‘Business Practices’ section, the proliferation of synthetic content has so far been far greater in free or low-cost media than in premium media.

It is still early days for adoption: consumer experiences are infrequent (but rising), novelty drives curiosity, and education (let alone literacy) is still limited (the odd pop culture sensation / debacle and the narratives it sparks can take most of the credit for what is in place). As synthetic experiences grow in volume and ubiquity (en route to becoming a new normal), segments will splinter, new needs will emerge, attitudes will shift, and behaviours will change. Yet financial means, I believe, will remain at the heart of many decisions.

Furthermore, a fragmented landscape of channels and gateways, layered atop abundant, precision-curated content libraries, has already contributed to the highly individualistic nature of media diets. Combined with the other determinants above, this means no two diets are the same. A plausible shift towards chatbot interfaces for discovering and consuming media would likely push this trend further in one direction.

Another direction may see diets that reject one form of synthetic content but embrace another, influenced heavily by adoption stage, mindset and experiential affect. You might reject 2Pac’s version of ‘Dancing Queen’ but be fine with a personal news anchor avatar chatting to you through your earbuds. Or watch AI Housewives of [Your Choice] but refuse to follow synthfluencers for health-related information.

Anticipating synthetic media adoption relies on understanding the nuances and complexities that inform the media diets of those who might consume it. Diet determinants will therefore be a helpful framework for creators and tool innovators as they define their audiences or users, and what ideal terms of engagement would look like should those audiences show interest.

Business Practices

We previously established how various problems drive the media industry’s rapid adoption of generative AI, with its promise of savings and efficiency. The technology’s potential for immediate and vast commercial gain (through radical savings from workforce cuts or through new, monetisable content experiences) has already made itself known through statements of intent from industry leaders and actions taken by media businesses.

The splintering of positions across the media industries partly mirrors the sociocultural currents described above, and partly reflects the structural erosion of the market.

Producers of ‘premium’ media (predominantly sitting behind paywalls or top-tier subscription fees) have overwhelmingly declared hesitant adoption and cautious, responsible experimentation (mainly around admin-heavy tasks and some research). Instead of surrendering as much work as possible to automation, they combine a cautious embrace of assistive tools for research and analysis with doubling down on their values and ‘artisanal’ skills, such as embodied research and storytelling, deep knowledge-building and sharply executed analysis.

Those operating low-cost or free, high-volume media outlets and platforms are likelier to stick to making their winning formulas work harder. They will probably resort to ‘AI-Enhanced’ content (fast to make and get out, possibly engineered for maximum engagement), ‘Dupes’ (cheaper than the real thing), and ‘McContent’ (inexpensive and easy to churn out copiously, with desirable profit margins). Furthermore, to hit their consumers’ pleasure centres, gossip, fascination, drama and spectacle will prevail, presented in familiar formulas.

If these developments accelerate against a backdrop of a collapsing Internet, a dying middle media market, and the emergence of ‘luxury media’, the likely result is a dominance of synthetic media that carries the hallmarks of being ultra-processed or, as per the last post’s analogy, of POPs.

Those priced out of premium or luxury media have no choice but to consume what is free or cheap. By default, this media is tied to data-hungry, attention-greedy advertising or to increasingly fragile subscriber-dependent models, distributing content designed by and for optimum algorithmic performance. In other words, it is not made with the intention of benefiting its consumers.

The business practices above show how incremental changes to existing outlets and platforms could play out, based on signals gleaned from the media industry’s public moves and experiments. This scenario also assumes that media producers remain in control of their fates as they benefit from savings and efficiencies in their production processes.

In anticipating future business practices with generative AI, there is a potential curveball to consider: chatbot interfaces and robotics. Chatbots will either continue to sit behind screens or become embodied in a new genre of devices reliant on commands, physical actions, dialogue and (maybe) projections, e.g. Humane’s AI Pin, the Rabbit R1, whatever Jony Ive does with OpenAI’s brief, an agent of choice accessible via AirPods, an Alexa app (unless Alexa 2.0 can fulfil the requirements), or a personal robot.

If chatbot builders’ visions were to dictate innovation in this space, searching or browsing on owned platforms might be rendered obsolete, should consumers invite chatbots to curate their media for them. This would require media businesses to rethink their entire value chains (particularly every aspect of distribution) and business models, and may see emergent, purpose-built platforms trump legacy businesses’ efforts.

Will we have one or a couple of chatbots as gateways and curators for the media we consume? Will we turn to our media brands’ chatbots on their owned platforms or to new ones? Will they be in dialogue with us, or will our digital twin have to decide what its real-world version may care to spend time with? Who will be designing new and legacy systems? What incentivises their creation, and how does it manifest in their intent? What data is required to make a chatbot-driven media diet fruitful - and how will it be shared, monetised, and protected? Will habits change - is this solving a genuine problem or serving a deep need? Furthermore, which medium (text, image, audio, video) will dominate in a conversation-driven environment?

Ironically, one benefit of a chatbot-driven media delivery system may be that consumers are confronted with fewer choices by default. This could reduce overwhelm and increase relevance while also forcing a reduction in the unsustainable volume and frequency of production across the industry. It might even make diets safer, unless the bot happens to be powered by a dogma-driven foundation model or one infiltrated by sleeper agents.

It could, however, also make consumers’ worlds very small and lonely if those building the systems don’t consider drivers of wellbeing and social stability in their processes.

NEXT: What are the consequences of these emerging impacts? What is at stake?