Sustainable Synthetics & Inoculated Minds | SITUATION & POSSIBILITY

1/5 What are the possibilities enabled by generative AI?

Situation

As generative AI redefines content production, distribution, and consumption, it also gives rise to entirely new media experiences, creators and platforms. Consequently, the business imperative for its swift adoption across the media industries (information, social and entertainment) is palpable.

All three aforementioned media verticals suffer from a version of diminishing returns, cost-cutting, production pressures, mindshare wars, personnel shortages, declining advertising efficiencies, and / or promiscuous, fleeing audiences. Unsurprisingly, the prospect of heightened efficiency, savings, and process optimisations - all at vast scale and speed, underpinned with the promise of even greater engagement via next-level personalisation - is alluring. From large businesses to swathes of amateur creators, avid experimentation is underway to find new use cases and ownable content innovations.

Meanwhile, culture and society's reception of tools and AI-generated media has been predictably polarised.

Skilled leaders snubbed text tools for being too ‘average’ and unreliable, while less-skilled aspirants valued the leg-up it gave them in their day jobs. Prompt-fluent infopreneurs were quick to help their audiences how-to their way to speedy riches - yet the subsequent flooding of content platforms and marketplaces from Amazon to Etsy reified an emerging sentiment that AI-generated content equals low-quality opportunism (“shamming, spamming, and scamming”, Ted Gioia, 2023).

To trained eyes, generative art may be an “expensive screensaver” (Jerry Saltz, 2023, in response to Refik Anadol’s MoMA moment) - for others, it offers a moment of respite in a museum they may not have entered without the lure of a soothing alien spectacle. While hard-working actors picketed to protect their likeness and incomes, some of their demi-god peers inked deals with companies like Hyperreal to synthesise and monetise theirs. One of Buzzfeed’s many AI bots will coach its readers (users?) to become influencers. Meanwhile, Snap’s was bullied and trolled.

The question ‘Did AI make that?’ entered entertainment critics’ vocabulary as a proxy for human-made duds, offering a humorous salve amidst endless uncertainty felt by creative classes. Yet for discerning writers (like those scripting the show Mr Robot, for example), writing assistants’ propensity for the evident and bland presented an opportunity. Using them to generate plot lines, they could identify the most obvious path, only to deliberately do the opposite of what had been assembled for them. In effect, they reverse-engineered mediocrity into quality.

So far, an ongoing period of experimentation has overwhelmingly yielded the media equivalent of background noise and viral novelties with short shelf lives. However, it is worth noting background content is “played for long hours and [earns] big returns under the payment model used by streamers” (Economist, 2023), while novelties can generate many millions of monetisable views.

Lensa profile pics may have come and gone, Ghostwriter977 may not have been allowed to chart, Grimes may not have published an ROI on rev-sharing her voice, Socrates and Bill Gates didn’t get a podcast deal following their pilot, and Deep Fake Neighbour Wars didn’t get renewed – at least not yet.

Could they have sown seeds that eventually give rise to meaningful and enduring experiences, exceeding expectations of quality that even the most critical judges can’t refute? Or will fillers and gimmicks prevail?

Generative AI Possibility

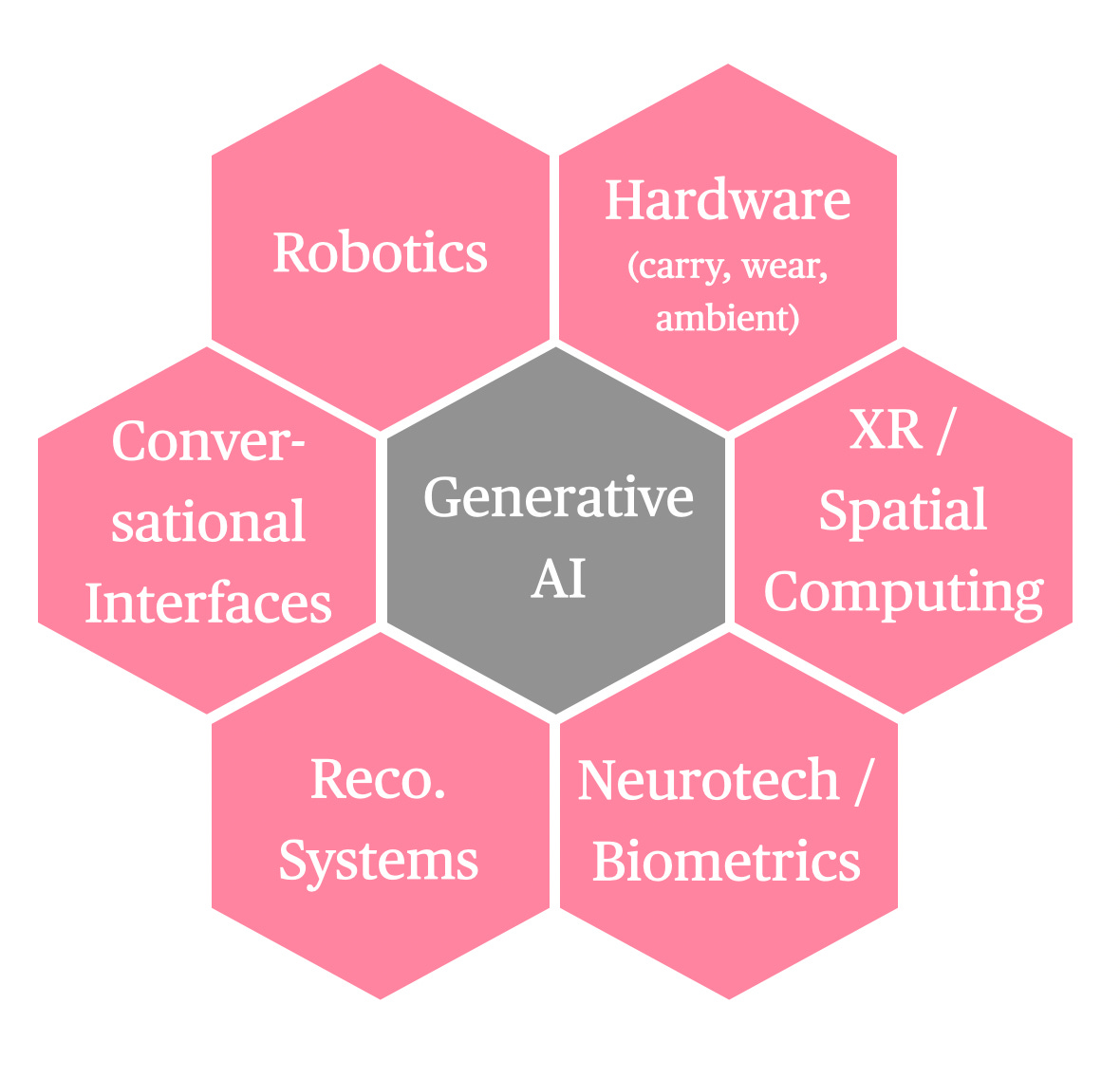

Across all typologies of synthetic content, combinatorial innovations pairing generative AI with other technologies are pivotal in shaping synthetic media experiences. These include:

Generative AI services are set to become screenless (e.g. Jony Ive x OpenAI partnership), ambient (e.g. Humane’s AI Pin), spatial, as overlays (AR) or presences (VR), and mechanically embodied (robotics). This, in turn, perpetuates shifts in how media content is distributed and experienced. Interactions that were once mainly surface-level (literally, behind glass, and figuratively, predominantly one-way) are becoming more immersive and intimate through dialogue, the technology’s preferred mode of engagement.

While the previous iteration of algorithmic personalisation was predominantly based on inferences from past behaviours (clicking, reading, scrolling), dialogue-driven interactions (intentional or otherwise) make for a deep knowledge of a user’s past and present interests, needs, and mindset. Together with mood states (tracked by wearables, e.g. Google via Fitbit, Apple via its Health app), all these data sets are poised to inform media creation and targeting. An eventual ability to inhabit and physically move around a synthetic media experience will also give whatever device is worn for that purpose an even more comprehensive range of physical and emotional cues to interpret and respond to in real time.

When we pair this assessment of combinatorial innovations with an analysis of emerging and speculative examples of synthetic media, we can start to map the types of content experiences set to manifest across the information space, in social media and entertainment. We can also begin to segment the creators responsible for making them, how existing platforms distributing these new types of content will adapt, and what purpose-built new ones may emerge.

It goes without saying that generative AI, like most technologies, builds on and accelerates established conditions and mechanisms (e.g. technology as an extension of a creative individual, designing content for mood support, accessible digital editing tools, pile-high-sell-cheap, propaganda, etc). It is fair to challenge whether anything is genuinely new or how what may come next differs from what is or was.

Yet, we can only identify and scrutinise the technology’s impact on the media ecosystem and consumers when we map emerging and anticipated changes. And this is a vital undertaking if we are inclined to steer it towards benefit and away from harm.

1. Typologies of Synthetic Content

Interplay

A raft of creatives are already embracing generative AI as an extension of themselves and their artistic practices. They are intelligently and intentionally using the technology to explore new frontiers of their capabilities and the genres in which they operate, inviting audiences to interact, ask meaningful questions (particularly about the nature of reality) and / or experience emotional resonance through immersion.

Of all the typologies we will explore, Interplay has the greatest potential to positively contribute to the ecosystem once it makes its way out of media arts (where most Interplay signals can be found to date) and into information, social media and entertainment.

Experiences like UVA’s Chromatic (2022), a “software-driven optical instrument”, give a glimpse of new kinds of audio-visual music experiences, while TeamLab’s Sketch Aquarium (2021) reimagines the mechanics of co-created storytelling (animating static drawings in an immersive environment). Artist Marguerite Humeau updated Adam Kossowski’s mosaic The History of the Old Kent Road (1965), utilising GPT-3 “to create a new, post-apocalyptic vision of the city” (Artnet, 2023) - thereby demonstrating how projective world-building based on existing ideas could translate into a new use case in the information space.

Interplay content is typically labour-intensive and craft-driven, propelled by the creator’s intention to make something of value that moves their genre on or creates an entirely new one, rather than something disposable and fast made for the sake of swift returns. It is less about abdicating work or cheating (a trope frequently thrown at new creatives using new technologies) and often entails custom soft- and / or hardware solutions built by the creators themselves (e.g. Sougwen Chung’s DOUGS - Drawing Operations Unit, Generation Four).

Neural Shifters

Building on the age-old behaviour of intentionally choosing media to shift or enhance a mood, generative AI is set to remove friction from the search process and enhance the desired effect. Through bot-driven dialogue or in tandem with neurotech wearables responding to biofeedback, Neural Shifters move their user’s mood state towards a predetermined measure of success (e.g. from angsty to relaxed or lethargic to stimulated).

Innovations in the ‘functional music’ genre (15bn monthly streams worldwide), such as AI-powered ‘Soundscape’ service Endel (boasting a $15m Series B investment), explicitly design soundscapes to enhance listener wellness, while Bedtimestory.ai supports the end-of-day wind-down with soothing, custom-generated stories.

Fiction and information could also adapt narrative arcs according to emotional tolerance or preference through intuition (i.e. decided by a machine) or intention (i.e. determined by the user) - something the experimental generative AI-powered app Boring Reports offers a glimpse of with its ability to neutralise sensational news headlines and stories.

AI-Enhanced

AI-Enhanced spans existing or familiar content experiences augmented in form and potency, produced at speed with minimal effort or resources. It also includes bringing physically impossible characters, objects, ideas and worlds to life and extending an experience beyond its original medium.

Dub a podcast recorded in one language into multiple ones, rapidly scaling its audience and opening up hundreds of new revenue streams. Turn a personal photo into a GIF or short film, give or take an expanded landscape or cast of characters. Generate visualisations for articles. Increase the wit or production speed of a meme seeking to connect with others over a zeitgeisty theme, accelerating the release of a guaranteed-to-be-relatable creation into social streams. Conjure the perfect sticker, filter, response, and background for a social post or chat message. Turn long-form writing into short summaries and / or audio - read or sung by a voice of the creator’s and audience’s choice.

Tools facilitating AI-Enhanced content also give rise to new genres and creator behaviours. For example, bots are starting to emerge alongside books, enabling readers to ‘talk’ to them (as economist Tyler Cowen recently demonstrated), opening up a new genre of publishing. In terms of behaviours, ChatGPT x DALL-E 3’s ability to conjure speculative multiverses has captured many creators’ imagination (and taken social media by storm) courtesy of their novelty, absurdity and hilarity (e.g. the angriest of angry marshmallows, a civilisation run by squirrels or the most extreme iteration of a national stereotype). Technically, no ‘what if…?’ ever needs to go unanswered again.

Dupes

Dupes will assume the form or style of someone or something already in existence. Their synthetic nature will ensure they travel far and wide without the constraints of physics or financial means.

There are three kinds of Dupes: unsanctioned counterfeits (e.g. deepfake Tom Cruise), sanctioned entities (e.g. ABBA Voyage, Elf.Tech by Grimes, Holly+) or someone / something seemingly familiar yet synthesised from the ground up (e.g. Copy, an AI fashion magazine, which generated its entire content - including clothes and models - with a skeleton staff using many generative AI tools).

Dupes-facilitation tools could allow anyone who felt so inclined to conjure their own entertainment experience (e.g. Fable’s Showrunner AI, which lets users prompt custom South Park episodes) or create music using voices in the style of living and deceased artists (e.g. SIXFOOT 5 mimicking Adele’s vocals for their own compositions). A celebrity voice of choice can read out study notes during exam preparations, recipes while cooking, and DIY instructions, injecting fun into otherwise mundane everyday tasks. Perhaps some synthetic housewives (possibly even in an actual or imagined city of the audience’s choice) are on the horizon, too.

Interaction with characters is no longer just one-way either - multiple incarnations of synthetic friends (e.g. Replika AI, DreamGF) promise companionship and emotional support. Synthetic influencers (e.g. Afterparty and 1337, whose ‘entities’ can exist on multiple social and streaming platforms) are becoming new objects of fandom, while other forms of avatars can be vessels for distributing all sorts of information (as demonstrated by AI-first news platform Channel 1 and the growing popularity of social infomercial hosts).

McContent

Like the ultra-processed food that inspired this typology’s name, McContent spans films, TV shows, posts, songs, and news stories engineered to fill space or satisfy a craving for something tasty or easy over and above offering anything of ‘nutritional’ value (we’ll explore this analogy in greater detail in the post concerning itself with media and the mind).

One strand of McContent spans benign filler or background content (advertising, audio-visual media, stock photos) - akin to empty calories, serving a low-grade commercial or personal purpose, filling empty space and optimised to appease search engines.

Another strand involves engineering content with a predilection for hot-button issues, feuds, gossip, ego-stroking or nostalgia to guarantee stand-out and traction (especially if fine-tuned to the palate of the individual watching it). Irresistibly packaged and delicious for a moment upon ingestion, one content nugget will swiftly replace another, collectively engineered for trance-like consumption in endless ad-supported streams (e.g. quizzes, synthetic horoscopes, a slideshow of the coronation afterparty BTS, synthetic thirst traps).

Nerve Agents

Nerve Agents are the equivalents of toxins in the media ecosystem - think mutant mis- and disinformation. They are either deliberately constructed to cause maximum psychological damage or accidentally conjured as a result of creator naivety or a machine hallucination. Either way, they affect unsuspecting consumers and can even harm unwitting institutions economically.

Countercloud is an early frontrunner in fully synthetic disinformation-facilitating tools ($400 to access), while the fake image of a burning Pentagon briefly shook the stock market. Even if very obviously unreal or disclosed as synthetic, Nerve Agents can still seed powerful ideas in the population’s collective imagination: a synthetic, six-fingered Trump being arrested riled his base while also satisfying his opponents.

Combinatorial Typologies

The typologies of synthetic media we just explored mirror their past and present counterparts in that they, too, are impossible to keep in tight boxes. Overlaps and combinations are inevitable.

An Interplay experience might, in fact, feature meticulously crafted and sanctioned Dupes (e.g. the style of the artist using them or the physical form of a collaborator). Nerve Agents could be disguised as McContent, engineered to behave like a Neural Shifter. McContent could widen its reach by including aspects of AI-Enhanced - as in, a text could also be an audio clip or a video translated into every language.

The intersection of Dupes and McContent is particularly lucrative - the so-called digital human economy is expected to reach $125 billion by 2035 (Gartner, 2023). Cue infinite synthetic humans pumping out infinite (and sponsored) unboxings, get ready with me’s, what I eat in a day’s, and travel vlogs.

Moreover, some typologies may collapse while new ones emerge, and additional subcategories will evolve over time, too. The map should, therefore, be treated as open and flexible.

2. Prompt Archetypes

Synthetic media creators, or Prompt Archetypes, can roughly be split into those with prerequisite skills (i.e. individuals who have studiously attained mastery in digital creativity) and those who are learning by doing. Sophistication, as the results of our collective experimental efforts have proven so far, is perhaps not as swift to come by as Big AI’s promise of democratisation has suggested. Quality and meaning still require a degree of skill rather than mere assemblage.

Three types of intention will drive Prompt Archetypes: making a valuable contribution to the ecosystem, extracting value from it, or outright destroying it through manipulation, attacks or distortion. Some archetypes may also have dual intentions (as the map tries to capture via ‘shadows’), reflecting that expression and commercial gain are rarely mutually exclusive in the digital media realm.

Contributors include Wizards, Crafters and Mood Engineers (although these could have a destructive shadow as well). Extractors span Creatively-inclined Novices, Big (Synthetic) Media, Fluents (who can all have shadows of contributors), and Grifters. Destroyers can manifest as Trolls, Psy-Ops, Hackers, and Terrorists.

3. Distribution Platforms

New experiences will either give rise to new fit-for-purpose content platforms, demand significant adaptation of existing ones, or slot into existing ones.

Interplay experiences could live in both existing physical and digital spaces. However, there is a strong chance that new ones will emerge (online and IRL) to meet their hosting, distribution, and audience engagement needs. TeamLab’s designated space PLANETS comes to mind. Disney’s IRL robots present an entirely new way of experiencing a story through dialogue and physically roaming around together - currently in a custom-built site inside theme parks, but eventually in the home or garden.

Given their reliance on specific inputs (e.g. biometrics) for optimal function, Neural Shifters will also benefit from purpose-built gateways connected to sensor-laden devices. Most come in the form of stand-alone apps, at least until Spotify et al. embrace curation via biofeedback.

Dupes that fall under the rubric of companionship benefit from bespoke platforms, where facilitating multi-modal dialogue will play a key role in interacting with them (as the previously cited examples of Afterparty and DreamGF demonstrate). Until then, they will turn social networks dedicated to connections with other people into partially synthetic ones - as shown by Meta’s push into entertainment with celebrity-infused character bots. As the natural dialogue capabilities of bots evolve, new or existing speaker-centric devices may come into play, too.

Meanwhile, sanctioned imitations, like Scott Galloway’s Prof G AI, are currently standalone sites. Dupes that fall under counterfeits or unsanctioned imitations, alongside AI-Enhanced, McContent and Nerve Agents, will predominantly exist across legacy social platforms in the social entertainment space. Said social platforms are already starting to adapt to their existence by creating their own versions or facilitating them via free creation tools while also trying to caveat them with clear labels.

Many signals suggest that the most prolific emergent platform, cutting across all six synthetic content typologies, will revolve around a chatbot or conversational interface. Trained to develop an intimate understanding of their users’ predilections, needs and tastes, chatbots are a natural interface for intelligently curating (at worst, starkly limiting) access to content, especially in an over-abundant environment. They are also the spine of any AI-driven avatar experience across the media ecosystem (information, social media, entertainment), able to assume the role of a personal newscaster, synthetic friend, or storyteller - in a virtual / physical form, and with a personality to match what is desirable to their audience-of-one.

NEXT: SYNTHETIC MEDIA ECOLOGY - How will these emerging content experiences affect the media ecosystem?
